Now that you have your spreadsheets, what the heck do you do with them?

It's one thing to have a book, and another to read it. Luckily, the language of SEO is actually quite simple, as long as you don't get bogged down in the syntax.

Grab a cup of tea, bring up your spreadsheet, and let's get to the bottom of this penalty.

In this section, we'll learn to spot bad links and to assess them the way a Google reviewer would.

Our "link profile" refers to the picture created by every link that points to our site. Think of each link as a pixel. Is your picture dark and dirty, or is it light and clean? Does it paint a scene that makes sense (links from good quality relevant sites), or are the pixels confused and random?

The sites that link to you should be authoritative and relevant.

If you have nothing but random low-quality websites voting you up, your results are going to suffer.

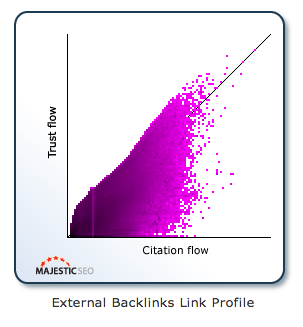

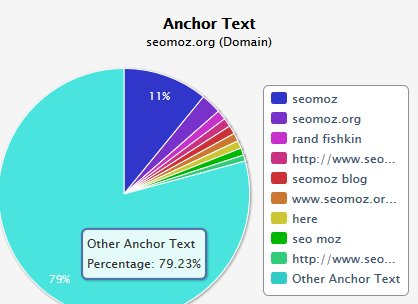

This is what an incredible link profile looks like:

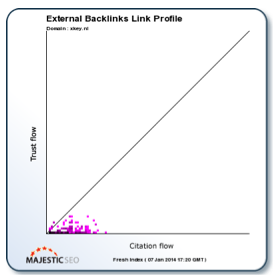

And here's one that's not so great:

These were taken from MajesticSEO.com, where you can see this for your own site.

The graph plots two metrics: Trust Flow and Citation Flow. As we've previously covered, your Trust Flow ought to be at least half of your Citation Flow. Majestic uses these "Flow Metrics" as "an indication of how different sites compare within the context of the internet."

Citation Flow reflects how many sites link to you, and Trust Flow quantifies how trustworthy those sites are. So the more links you have, the more important it is that at least some reputable sites are counted among them!
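If you're checking a lot of domains, the "at least half" rule of thumb is easy to apply mechanically. Here's a minimal Python sketch, assuming you've copied each domain's Trust Flow and Citation Flow from Majestic by hand; the function names are our own, and this isn't a Majestic API.

```python
def trust_ratio(trust_flow: float, citation_flow: float) -> float:
    """Return Trust Flow divided by Citation Flow (0.0 if there is no Citation Flow)."""
    if citation_flow <= 0:
        return 0.0
    return trust_flow / citation_flow


def flow_looks_healthy(trust_flow: float, citation_flow: float,
                       min_ratio: float = 0.5) -> bool:
    """Apply the 'Trust Flow at least half of Citation Flow' rule of thumb."""
    return trust_ratio(trust_flow, citation_flow) >= min_ratio


# Hypothetical figures copied by hand from a Flow Metrics report.
print(flow_looks_healthy(trust_flow=30, citation_flow=40))  # True  (ratio 0.75)
print(flow_looks_healthy(trust_flow=12, citation_flow=40))  # False (ratio 0.3)
```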

Scroll down below the Flow Metric graph to the anchor text chart.

In order to look natural, you probably want the majority of that chart to be "Other Anchor Text".

Notice not only that the vast majority of these links are so diverse that they don't get a dedicated label of their own (and are lumped together under "Other Anchor Text"), but also that the next most common are variations on the brand name and the owner's name. This is how people tend to link to sites when they do it naturally.

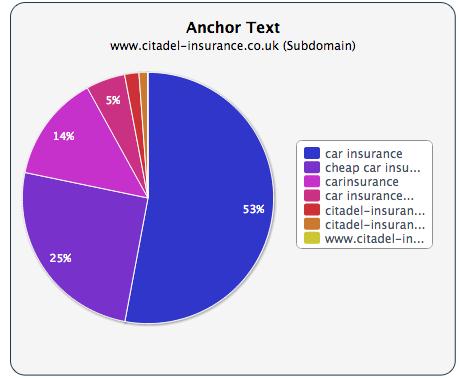

Here's an example of an anchor text ratio that is likely to get flagged, and possibly penalised:

If most of your anchor text is the exact keyword you want to rank for, Google will be well aware that you're trying to game the system. That's just not how most people link when they do it of their own accord.

So why do people still use exact-match keywords? Well, it used to work. In the old days, a link whose anchor text matched the keyword you wanted to rank for was far more powerful than one with something random like "Click here", or with navigational or brand anchor text.

That’s no longer the case. The search engines differentiate between brand name anchor text and other kinds, and overdoing commercial anchor texts can now be a shortcut to a penalty.
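To put a number on your own ratio, you could export your backlinks' anchor texts and measure how much of the total is exact-match keywords. The sketch below is a hypothetical Python example: the anchor list and the keyword set are made up, and since there's no published "safe" percentage that we know of, it only measures the share rather than judging it.

```python
from collections import Counter


def anchor_shares(anchors: list[str]) -> dict[str, float]:
    """Return each anchor text's share of the total, normalised to lowercase."""
    counts = Counter(a.strip().lower() for a in anchors)
    total = sum(counts.values())
    return {anchor: n / total for anchor, n in counts.items()} if total else {}


def exact_match_share(anchors: list[str], money_keywords: set[str]) -> float:
    """Fraction of anchors that exactly match one of the target keywords."""
    wanted = {k.lower() for k in money_keywords}
    return sum(share for anchor, share in anchor_shares(anchors).items()
               if anchor in wanted)


# Hypothetical export: one anchor text per backlink.
anchors = ["Acme Widgets", "acme widgets", "Jane Smith", "click here",
           "cheap blue widgets", "cheap blue widgets", "https://acme.example",
           "this review", "Acme", "great guide"]
share = exact_match_share(anchors, {"cheap blue widgets"})
print(f"Exact-match share: {share:.0%}")  # Exact-match share: 20%
```

Brand and name variations ("Acme Widgets", "Jane Smith") are counted separately from the money keyword, mirroring the natural pattern described above.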

Unfortunately, there's no better way to clean up a link profile than to go through every link manually, evaluate it, and decide what to do with it.

Links take a long time and a lot of hard work to build up, at least if they're genuine. This is why a careful manual review is far preferable to simply removing huge swathes of your links, including ones that may never have caused a problem in the first place.

Once you've determined that a link is good, annotate it as such in your spreadsheet so you'll know to leave it alone. Don't remove the link from the spreadsheet, especially if you've received a manual penalty. Remember, you're showing your working to the Google employee who will eventually evaluate your reconsideration request.

If you've been linked to editorially from an obviously authoritative site, such as a newspaper, you can probably mark that down as safe immediately.

If you come across a site you've never heard of before, take a look at the page that links to you, and at the home page of the domain. Listen to your gut instincts. Does that look like a genuine reference to you? Does the site look legitimate? Are there blog posts about every niche imaginable, and yet they're not LifeHacker?

Ultimately, a link that looks natural is one that:

Are there a thousand other links on the same page?

Is the content eye-wateringly bad?

Most of the time it's fairly easy to spot a spammy site, and you'll get better with practice as you go through your own profile. This is why a manual review is so difficult to survive if you're not on the straight and narrow.

The link types that most often trigger manual reviews include, but are not limited to:

Isolating instances of these in your link profile should be your priority at this stage.

However, sometimes it can take a bit of mild detective work to properly vet someone else's domain. Again, it doesn't take long and you'll get faster as you practice.

Deep down, you'll know whether a link is editorial in nature, or whether it has been gamed, paid for, or is manipulating anchor text.

1. Throw the domain in Google

Use the following syntax:

site:theirdomain.com

If nothing comes up, then they have been de-indexed by Google - a probable sign that they themselves have been heavily penalised.
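If your spreadsheet has many domains to vet, a short script can generate the site: search links for you to open one by one. This is a minimal sketch; it only builds ordinary Google search URLs and doesn't fetch or parse any results. The domain names are placeholders.

```python
from urllib.parse import urlencode


def site_query_url(domain: str) -> str:
    """Build a Google search URL for a site: query on the given domain."""
    return "https://www.google.com/search?" + urlencode({"q": f"site:{domain}"})


for domain in ["theirdomain.com", "another-example.net"]:
    print(site_query_url(domain))
```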

2. Throw the domain in Majestic

Run the same quick analysis that you did for your own site.

Look at their flow metrics. Is their average Trust Flow less than half of their average Citation Flow? If it is, it may well be a negative sign.

3. Look for tell-tale keywords

Look at their anchor text. Are there large numbers of targeted keywords? Are those keywords things like "seo", "backlinks", or "boost search results"?

4. Check their PageRank

If you're using Chrome, install the free PageRank Status extension, and you'll automatically see the PageRank of every page you visit. Alternatively, enter the domain in the search bar on PRchecker.com.

A zero or 'grey-barred' PageRank on the homepage is a bad sign.

However, don't treat this metric as the decider. Some spammy sites manage to maintain a high PageRank, and some natural ones have a low one. It's just one sign among many that you can use to build a full picture of the site you're evaluating.

5. Check their domain authority

Throw the domain into OpenSiteExplorer.com, and look at its domain authority in the top left.

This is Moz's own metric. It is widely used by the SEO community, and most consider it more descriptive of a site's authority than PageRank (Google's oldest metric).

A domain authority of 20 or lower could indicate a spammy site.

6. Weigh the factors properly

Covering multiple niches doesn't always mean a site is spammy. Nor does a less-than-stellar Trust Flow, or anchor text diversity that falls short of Moz's high standards.

Weigh everything up, and if you're still not sure after considering all the factors listed above, mark the link with a question mark, or some other indicator of uncertainty, in your spreadsheet. Get rid of the ones you're sure about first; you can always hire a professional to quickly look through any that have been marked as questionable.
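For the spreadsheet, it can help to turn the checks above into a simple tally of red flags. The Python below is one hypothetical way to do that: the field names, the "de-indexed means bad" rule and the "three or more flags" cut-off are our own assumptions rather than any official scoring, and anything in between lands as "?" for a human to look at.

```python
from dataclasses import dataclass, field

# Phrases from the checklist above; a simple substring match, so expect
# some false positives (e.g. "seo" inside another word).
SPAM_ANCHOR_TERMS = ("seo", "backlinks", "boost search results")


@dataclass
class DomainCheck:
    domain: str
    indexed: bool            # did a site: search return any results?
    trust_flow: float        # from Majestic
    citation_flow: float     # from Majestic
    domain_authority: int    # from Moz
    homepage_pagerank: int   # use 0 for a grey bar
    top_anchors: list[str] = field(default_factory=list)


def red_flags(c: DomainCheck) -> list[str]:
    """List the warning signs this domain shows."""
    flags = []
    if not c.indexed:
        flags.append("de-indexed")
    if c.citation_flow > 0 and c.trust_flow < c.citation_flow / 2:
        flags.append("low trust ratio")
    if c.domain_authority <= 20:
        flags.append("low domain authority")
    if c.homepage_pagerank == 0:
        flags.append("zero PageRank")
    if any(term in a.lower() for a in c.top_anchors for term in SPAM_ANCHOR_TERMS):
        flags.append("spammy anchors")
    return flags


def verdict(c: DomainCheck) -> str:
    """Return 'good', 'bad' or '?' (questionable) for the spreadsheet."""
    flags = red_flags(c)
    if not c.indexed or len(flags) >= 3:  # assumed cut-offs, tune to taste
        return "bad"
    return "?" if flags else "good"


example = DomainCheck("spammy-example.net", indexed=True, trust_flow=5,
                      citation_flow=35, domain_authority=12, homepage_pagerank=0,
                      top_anchors=["cheap seo services", "buy backlinks"])
print(example.domain, verdict(example), red_flags(example))
```

Keeping the list of flags alongside the verdict gives you the "working" to show in your spreadsheet, not just a label.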

Once populated with data, your link profile spreadsheet will be your most valuable possession until you get your penalty revoked.

Mark every link as good or bad (or questionable if unsure), and then you can set about the real work of removing the bad apples from your bunch in Chapter 5.

In the last chapter, we covered the content inventory, and if you believe your content is part of the problem, you should have your content spreadsheet populated with a row for every page on your site.

You will have made a snap judgement as you went over each page, and perhaps made notes of your impressions.

That's a good start, but what we're looking to do here is significantly improve the standard of the content on your site.

We can do this with a qualitative analysis of the content.

The following list of heuristics is fairly exhaustive. Don't feel that you must use them all; choose the ones you feel would most benefit the content of your site. For the purposes of the penalty review, we recommend using at least numbers 1 and 2, the ROT and Audience Relevance analyses. For each heuristic you choose, add a labelled column to your spreadsheet.

Simply understanding these different aspects of content can be hugely helpful in itself when planning future content strategies.

This forms a simple framework for deciding whether or not a piece of content should be eliminated entirely.

Redundant: Is the information presented better elsewhere on the site?

Outdated: Was the content timely once, long ago, but is now next to useless?

Trivial: Should it have been published in the first place?

ROT is the most important and fundamental content analysis, and marks obviously poor content as destined for the "Trash" bin. If it doesn't deserve attention, make sure it no longer receives any.

Content must be appropriate for the reader before it is ever useful. The reader, therefore, must be able to find the relevant content he or she requires with relative ease.

Is this page packed with useful content for its target readership, and can that reader find it? This is especially important if you're likely to have a number of different types of visitors to your site.

All pages need to be relevant to someone. If you're having difficulty with this heuristic, imagine the "ideal" reader of a particular page. It's okay to generalise; just get an idea of who would be reading it, since only then can you judge whether it's relevant.

Items of similar topic area should be brought together and structured into a logical hierarchy.

Information needs to be easily browsed, and since more content leads to more questions, the reader should be able to follow trains of thought from one piece to the next.

This can be as simple as categorising blog posts, and linking the most useful to new visitors into a "getting started" page.

Keep some distance between disparate topics.

Many sites fall short on this with their FAQ page. It might seem useful to put all of a customer's questions in one place, but not if the reader has to wade through pages of irrelevant topics before finding their question.

Keeping dissimilar topics apart is just as important as keeping similar topics together.

If you mention a piece of content, and especially if you link to it, you'd better make sure it exists.

Internal links should never lead to a 404 error page, but we often see it happen. An "under construction" page is also unacceptable.

Perhaps you want to lead people to where content might be in the future, to a page that isn't quite finished. Instead of linking to it now, make a note in your content inventory, so you'll remember to add the link from the current page once the new content is published.
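One way to catch dead internal links before a reviewer does is to compare every internal link against your content inventory. This sketch assumes you've already exported each page's links into a dictionary (the URLs are hypothetical). It only flags targets missing from the inventory, so you'd still want a crawler to confirm actual 404s, and URLs that differ only by a trailing slash count as different pages.

```python
from urllib.parse import urldefrag, urljoin, urlparse


def find_dead_internal_links(links_by_page: dict[str, list[str]],
                             inventory: set[str]) -> list[tuple[str, str]]:
    """Return (page, target) pairs for internal links whose target isn't in the inventory."""
    dead = []
    for page, hrefs in links_by_page.items():
        for href in hrefs:
            # Resolve relative links against the page, then drop any #fragment.
            target = urldefrag(urljoin(page, href)).url
            if urlparse(target).netloc != urlparse(page).netloc:
                continue  # external link, out of scope for this check
            if target not in inventory:
                dead.append((page, target))
    return dead


inventory = {
    "https://example.com/",
    "https://example.com/faq",
    "https://example.com/blog/getting-started",
}
links_by_page = {
    "https://example.com/": ["/faq", "/blog/getting-started", "https://twitter.com/example"],
    "https://example.com/faq": ["/pricing#plans", "/"],
}
print(find_dead_internal_links(links_by_page, inventory))
# [('https://example.com/faq', 'https://example.com/pricing')]
```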

Conceptual incompleteness is also important to consider. Was the content rushed and finished half-heartedly? Be sure that everything a reader needs to know is in there.

We can't see what's behind a link, but we can get a sense for what might be.

Appropriate labelling is essential, as is keeping readers crystal clear about where they are on the path towards the information they seek.

Abandon internal jargon or made-up words when describing what’s behind a link. Make it clear what can be found down every avenue.

Users need a clear understanding of the depth and breadth of the information that they may find on your site.

Do some pages mislead, if only a little, as to what can be found on the site? If it's possible a user can wind up confused and leave empty-handed, it's likely that a manual reviewer would have noticed and marked it against you.

Clear navigational cues and a strong hierarchical structure are a must; combined with good information scent, they keep the experience of using your site as straightforward as possible.

Both a strong hierarchy and a well-functioning search feature are a must for modern websites.

You're spending time and money on your content, so you want users to be able to find it when they need to. It's remarkable how many sites put critical information behind obscure contextual links or in other places hidden from primary navigation.

It can be difficult to notice the blind spots when you're so familiar with the content yourself. Imagine you were a new visitor, completely clueless. Would you still be able to find what you need?

Just as any visitor should land on the homepage and get an instant feel for where to go to find what he needs, another should be able to make her way to the same piece while reading a different part of the website.

Every user is different, and will come to the site with slightly different expectations, knowledge, and mindsets. Each piece of content, then, should be accessible from more than one place.

If it's easily findable from the home page, that might be "good enough", but if you can prevent the need for backtracking whenever possible, you'll elevate your site to a new level of quality.

Your site structure should lend itself to the mental models of your users.

There are four distinct content-seeking behavioural types:

Is the access path of a particular piece of content appropriate for the likely browsing styles of users who ought to be stumbling across it?

Complex structures can work so long as they are consistent.

Think of an Amazon product page. There are dozens of different sections and pieces of information, but regular users know exactly where everything is, because the layout is consistent and never changes.

When your site remains consistent throughout, your users naturally develop a mental framework specific to you. Not only does it make the experience of browsing your site more seamless, but it also engenders brand loyalty.

Things need to be up to date.

Perhaps you come across some content that would strictly fail the ROT test because it's outdated, but that would be very useful if updated. If keeping your content fresh is a problem, mark time-sensitive areas in your spreadsheet, or list them in a separate one, so that they can be reviewed every quarter to check that they're still relevant.
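If you record when each time-sensitive page was last reviewed, listing the ones due for their quarterly check is straightforward. A minimal sketch, assuming a 91-day approximation of a quarter and made-up page paths and dates:

```python
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=91)  # roughly one quarter; adjust to taste


def overdue_for_review(last_reviewed: dict[str, date], today: date) -> list[str]:
    """Return time-sensitive pages whose last review is more than a quarter old."""
    return sorted(page for page, reviewed in last_reviewed.items()
                  if today - reviewed > REVIEW_INTERVAL)


time_sensitive = {
    "/pricing": date(2024, 1, 10),
    "/events": date(2024, 5, 2),
    "/shipping-rates": date(2023, 11, 20),
}
print(overdue_for_review(time_sensitive, today=date(2024, 6, 1)))
# ['/pricing', '/shipping-rates']
```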

When a page is updated, Google notices, and it's one of the many positive SEO signals that mark you out as a site that’s dedicated to helping your users.

To help with the process of analysing the quality of your content, it's useful to quantify as much as possible. If you like, tally up scores to weed out pages that fall short on every heuristic, as opposed to ones that only need a little tweaking.

Keeping it as simple as possible is often a good approach if you have a lot of content to get through. In that case, try a binary yes-or-no system, and fill your rows with 1s and 0s under each heuristic.
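As a rough illustration of the binary system, here's how the 1s and 0s might be totalled per page in Python. The heuristic column names are our own shorthand rather than fixed labels, and the pages and scores are invented:

```python
# Hypothetical columns: one per heuristic you chose, 1 = passes, 0 = fails.
HEURISTICS = ["rot", "audience", "structure", "completeness", "findability", "currency"]

pages = {
    "/blog/2011-office-party": {"rot": 0, "audience": 0, "structure": 1,
                                "completeness": 0, "findability": 0, "currency": 0},
    "/guides/getting-started": {"rot": 1, "audience": 1, "structure": 1,
                                "completeness": 1, "findability": 1, "currency": 1},
    "/faq": {"rot": 1, "audience": 1, "structure": 0,
             "completeness": 1, "findability": 0, "currency": 1},
}


def binary_score(row: dict[str, int]) -> int:
    """Count how many heuristics a page passes (missing columns count as 0)."""
    return sum(row.get(h, 0) for h in HEURISTICS)


# Worst pages first: low scores are candidates for removal or a rewrite.
for page, row in sorted(pages.items(), key=lambda item: binary_score(item[1])):
    print(f"{binary_score(row)}/{len(HEURISTICS)}  {page}")
```

Pages at the bottom of the scale fail most heuristics and are the first candidates for the ROT treatment; those near the top may only need a little tweaking.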

If you have a manageable amount of content, or a dedicated team on hand to go through it, you might want to use a more granular approach to help you prioritise more precisely.
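For the granular approach, one hypothetical setup is to rate each heuristic from 1 to 5, sort pages by their average, and note each page's weakest area so you know what to fix first. The scale, column names and data here are assumptions for illustration:

```python
# Hypothetical 1-5 ratings (5 = excellent) for each heuristic you chose.
ratings = {
    "/faq": {"rot": 4, "audience": 4, "structure": 2, "completeness": 3, "findability": 2},
    "/services": {"rot": 5, "audience": 4, "structure": 4, "completeness": 4, "findability": 5},
    "/blog/old-promo": {"rot": 1, "audience": 2, "structure": 3, "completeness": 2, "findability": 1},
}


def summarise(row: dict[str, int]) -> tuple[float, str]:
    """Return a page's average rating and its weakest heuristic (first one on ties)."""
    average = sum(row.values()) / len(row)
    weakest = min(row, key=row.get)
    return average, weakest


# Lowest averages first, so the pages needing the most work top the list.
for page, row in sorted(ratings.items(), key=lambda item: summarise(item[1])[0]):
    average, weakest = summarise(row)
    print(f"{average:.1f}  {page:<16} weakest: {weakest}")
```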

Which one you choose is up to you. If you're under a manual penalty, it may help in the reconsideration process to have more detailed data to present to Google's Webspam Team, but both can work so long as the analysis is thorough and honest.

Achieving this level of knowledge about one's own business is rare. Your online presence is an entire marketing arm, one that is in a position to grow naturally as the internet and its use continue to grow. Knowing exactly what every piece of content on your site is like and what its purpose is, and exactly how many people are potentially sending visitors to you and from where, is what this chapter's methods are all about. Complete clarity.

In Chapter 5 we'll cover the real work: cleaning up your site so that it is squeaky clean and Google won't be able to resist allowing you back into its results.
